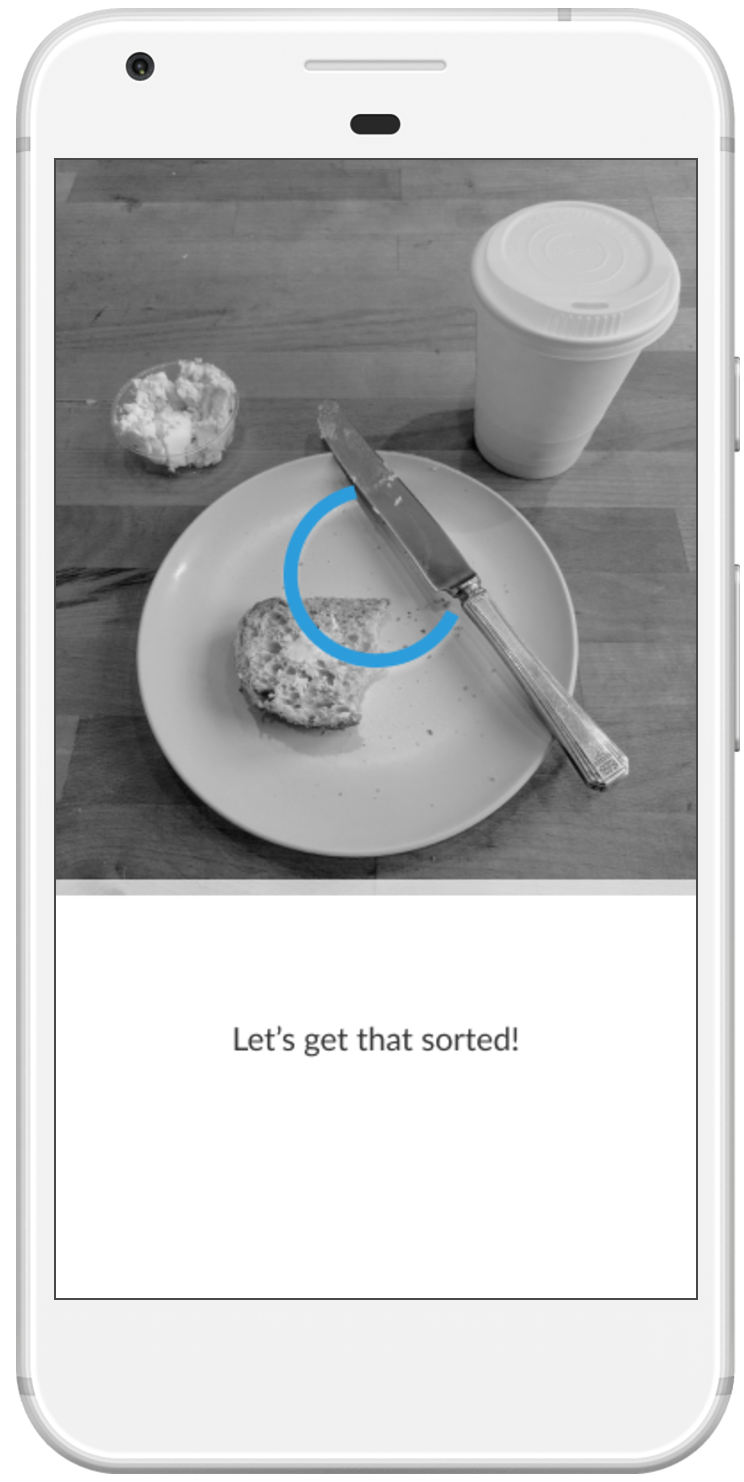

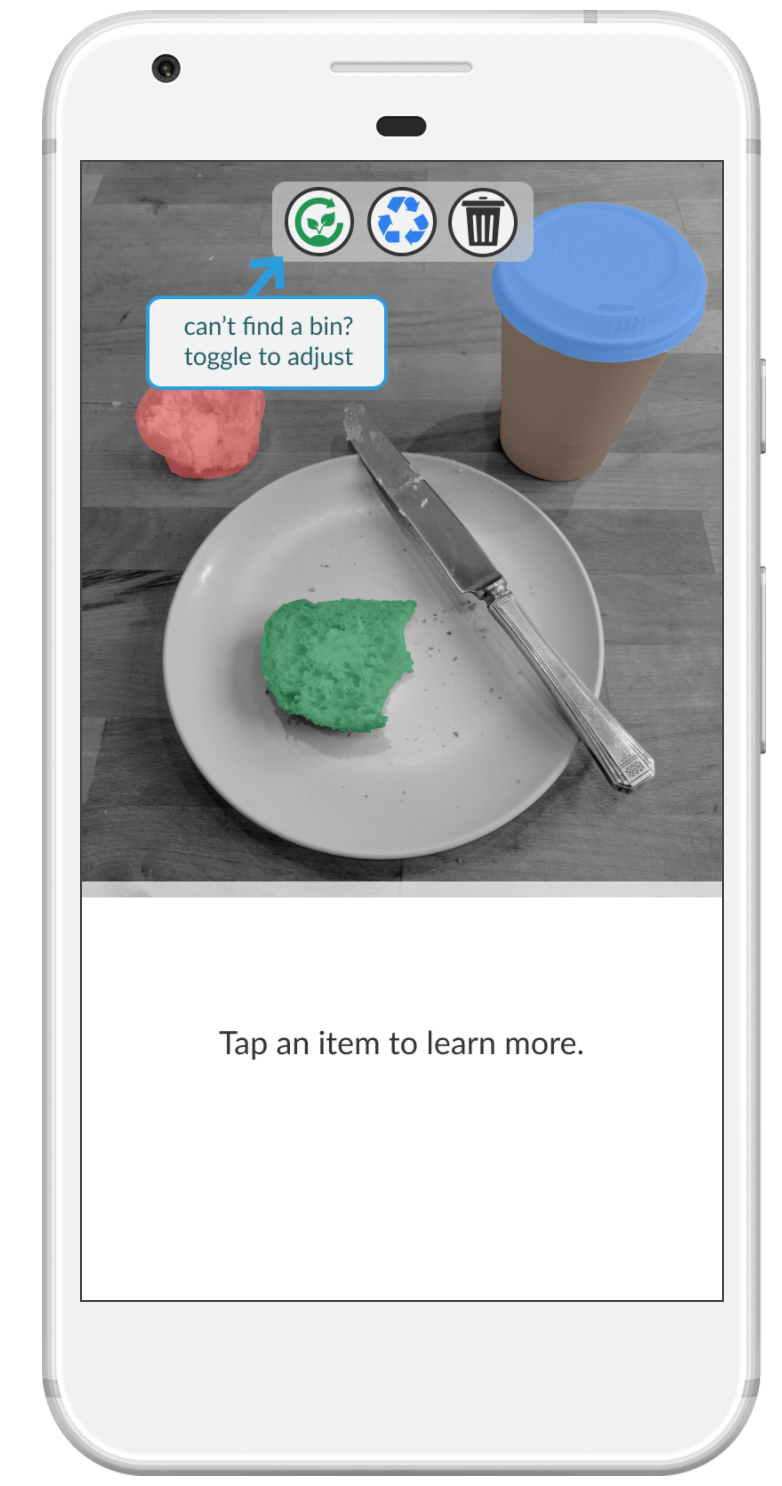

Clairity is the result of a collaboration with Cameron Gilbert. This prototype explores the use of machine vision to help consumers sort items for disposal. The application lets a user photograph items for disposal and then highlights each item with its proper disposal channel.

The Process

Project Timeline: 2 weeks

Cameron developed the concept for this application from his previous work interviewing dumpster divers. Together, we explored potential solutions in this space and prototyped the experience above.

Early Prototype - Is it worth building?! Would people use it?

I designed an initial prototype to examine a few key design questions:

- Are users willing to photograph their refuse?

- When will users turn to an app? At every disposal, to track their lifetime impact and build up incentives? Or only when unsure what to do?

To understand the use case, we gave users a phone number to which they could text photographs of items they were about to dispose of. Acting as though we were a bot, we replied with the proper disposal method based on their location and photograph, along with an incentive for participating (a seed planted).

Users felt strongly that this would be something they would turn to in moments of uncertainty rather than something they would use every day.

“It made me feel good that you’re planting a seed. … I already know what’s compostable and recyclable - I feel like that was 2 years ago... Taking a picture feels like an extra step, it already makes me feel good when I sort things” - Andrea, 25

Feedback suggested that our key benefit was a quick response in moments of uncertainty. One user even said she "felt cared for." This helped us focus our basic flow.

Expert Interview

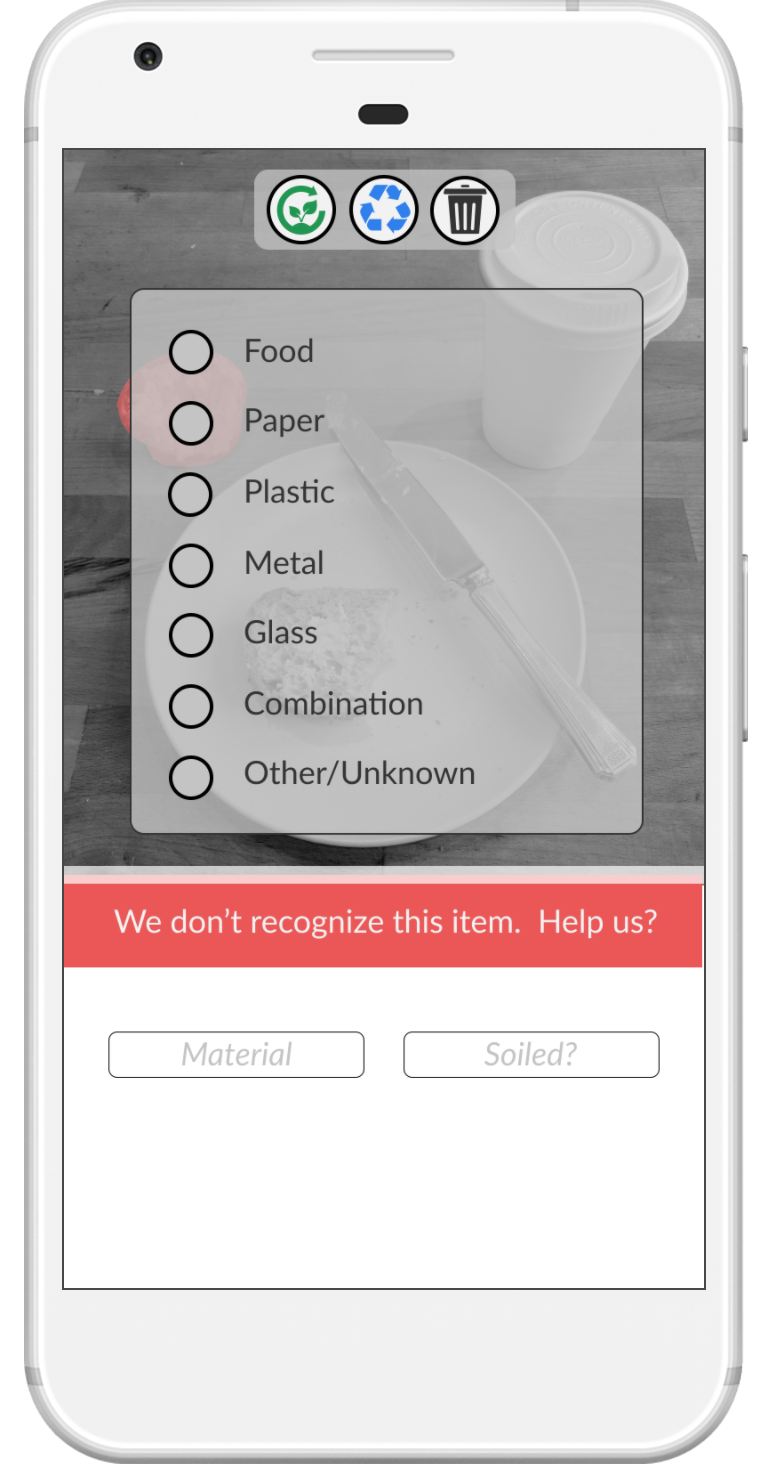

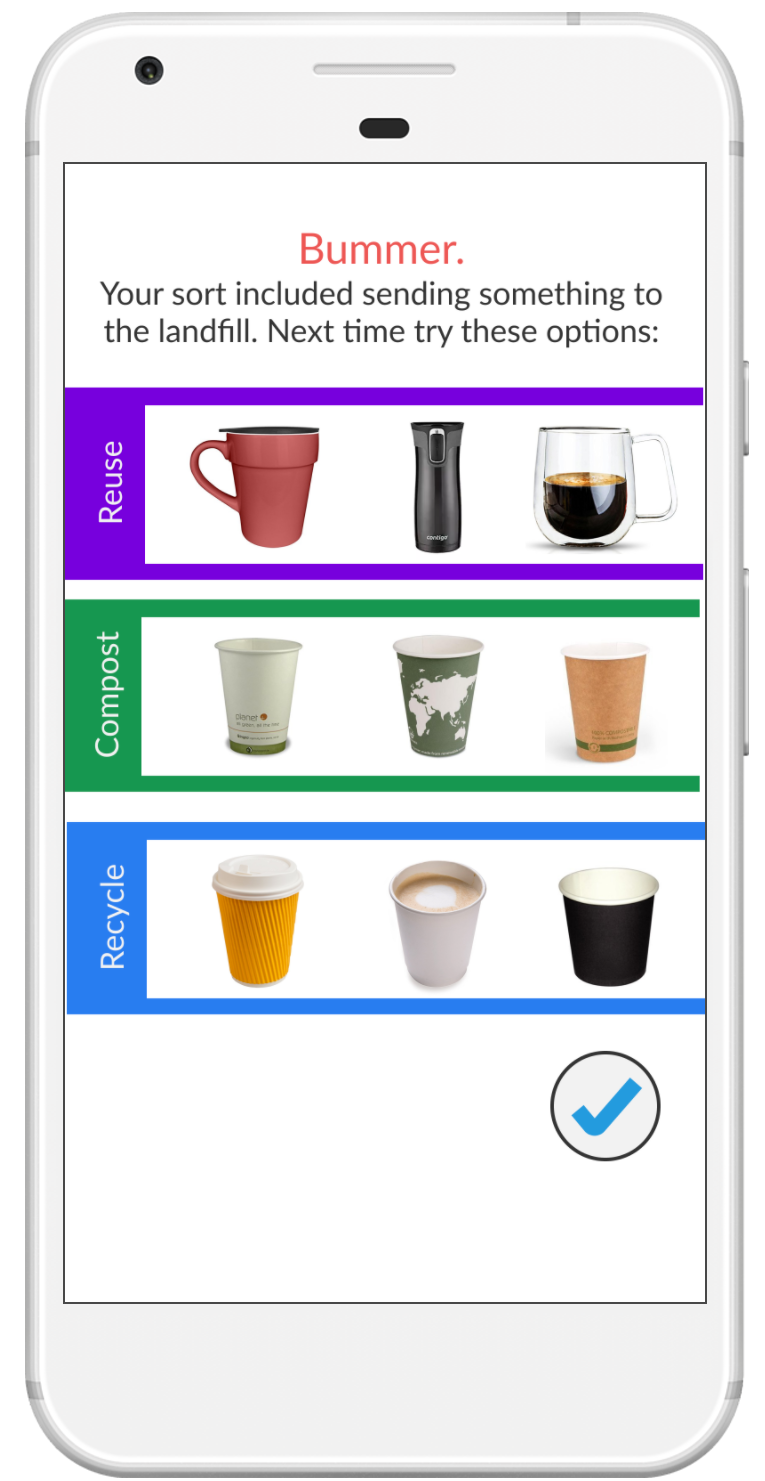

While running our early prototypes, we reached out to Stanford's waste management office and learned about the biggest challenges in diverting waste from landfills. Many issues arise from a lack of understanding of the process (e.g., greasy pizza boxes can't be recycled because cleaning them requires too much detergent, so it's much better to compost them!). It became clear that we needed to include some level of information to help people improve their disposal skills over time.

Digital Prototype

Cameron and I built the idea into our initial Marvel digital prototype (prototype for Android). We created the flow together, making sure to include opportunities for users to help inform the machine learning (see the "we don't recognize this item" screen). Cameron created an animation showing where items ended up and why. I created the visual language and most of the initial sorting screens.

We tested this prototype with a few users and identified opportunities for improvement in future iterations, including a clearer way out of the application after sorting.